The creativity used in establishing models is fascinating, but we aren’t going that deep. Instead, we will speak broadly about concepts of interactions with AI chat and give you an idea why there is a need for so many models and modes to exist.

Zero-Shot Prompting

This is most likely what you are doing when you provide a prompt. You don’t need to provide any additional context, you just ask for what you want. With this approach you are relying entirely on the previous training of the model to come up with an answer. Think of it as simply probing the model to see what it knows.

These Large Language Models (LLMs) are trained with so much information that they perform surprisingly well even without any additional training or creative prompting.

Of course, the responses can be improved by engineering prompts. You may have already seen lots of tutorials and examples for creative prompt engineering. Those details can go a long way, but we can often get more out of our interactions by providing additional context.

Few-Shot Prompt Optimizations

Responses can be substantially improved using a very basic approach to training. Supplying a few examples of your own prompt completions helps guide the AI toward the desired output (or just one example, for one-shot prompting). This is an extremely simple example, but take note of how the response mimics the provided samples in the following few-shot prompt:

Doing exactly this is possibly the biggest trick of ChatGPT’s user interface. While you as the user are only typing in one prompt at a time, the system is providing your entire chat history with each submission; your conversation becomes a few-shot optimization for your prompt. The AI learns how you ask questions, and in some way can infer your preferred answers.

However, there are some downsides to blindly providing the entire history of a conversation. You may get a lot of noise in the response. That noise includes non-specific questions that you asked (have you ever had to re-ask a question?), and perhaps prompt responses that you didn’t like are included (were you ever unhappy with a response?). Another pitfall is if the conversation extends past the model’s input limit the beginning of the conversation will be lost. You don’t have any control over the prompt completions that are provided, so the answers may not be as specific as you want.

The ideal way to work around these concerns is to intentionally provide your own carefully crafted prompt context. This can be actual answers from the AI, your own curated data, or answers from the system that you have taken and further refined.

In any case the point is that you ideally want to very explicitly state “These are the types of responses I want” and the more specific and accurate your data the more useful the responses will be.

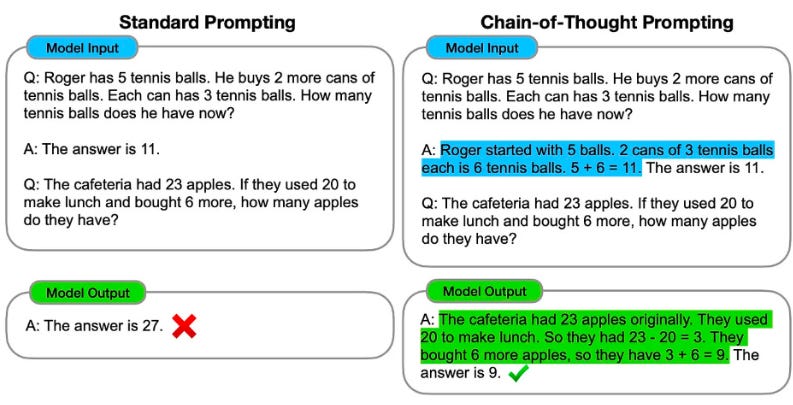

Chain Of Thought (CoT) Prompting

CoT prompting is a way of asking the AI to lay out it’s chain of thinking so we can understand why it generated a specific answer.1 CoT is a few-shot technique where you provide samples related to reasoning.

Being intentional about the focus doesn’t just suggest how you would like the AI to respond, but further encourages the AI to explain the answers it has provided. We could consider this to be a type of few-shot prompting, but it is important to call out separately because it addresses reasoning, which is something that these systems are typically not very good at.

Model Fine-Tuning

Another way to think about fine-tuning is many-shot prompting. Some suggest that as few as 100 examples can be enough for model training, while others recommend 1,000 or more.

Whether you want to fine tune or use a few-shot approach will depend on a number of factors, including how much of the data you are going to re-use, how often you are going to re-use the data, and the related costs of different approaches.

Aside from the size, the biggest difference between few-shot prompting and fine-tuning is that prompting needs to be repeated with every single prompt, whereas after fine-tuning a model is ready to use without having to repeat examples.

Model Training

Fine-tuning is much more limited than the vast amounts of data that models are trained on. We could go deep into options here, but the important bit is that training is fairly involved and tends to require a lot of compute power.2

Prompt engineering comes along after all of this is complete and is only providing the tiniest bit of direction to request that the model produce a specific response.

The type of training that the model has had, along with any additional learnings or encouragement you add, is ultimately what guides the generation of a specific response.

How you train the model, what additional learnings you provide, and what type of response you are looking for must all be considered when attempting to produce output from one of these AI systems.

Reinforcement Learning from Human Feedback (RLHF)

While AI is capable of learning a lot through observation, it is also possible for us to provide direct feedback.

RLHF is just a fancy term for “Telling the AI whether or not we liked the information they produced.”

Model Creation

Let’s briefly cover creation of the baseline models themselves. Training is the submission of data, but the model is the brains that ingests and makes sense of the data.

We have many models because we're not always sure how the models will work, or if they will work at all. We repeat this process of trying different approaches until we see responses that made sense... but even good models sometimes produce responses that don't make sense.3

The best models impress us, and occasionally we are awed by abilities we did not anticipate.4 OpenAI's ChatGPT (as of March 2023) was probably the first to get the attention of the masses, but there are many other capable models out there.

Some models are trained with many billions or even trillions of parameters, and many may have capabilities that we have not even fully understood. These really big (large) models make sense of human language… and that’s why they are called Large Language Models, or LLM’s for short.

Although LLMs are not the only type of AI model or even the most interesting, they are the ones primarily making the news today.5

The Takeaway

AI can ‘learn’ in many different ways. AI is currently very capable of doing a lot without training, but your specific needs may require fine-tuning a model with your own data. Significant undertakings or specific use cases may benefit from training or even creating a model from scratch. Luckily, most of us can benefit by adding just a little encouragement within a prompt.

With an understanding of training methodologies and our abilities to influence AI systems, we can better harness the capabilities of AI models to unlock their full potential.

Compute power is becoming a bottleneck for developing AI, December 2022

https://techmonitor.ai/technology/ai-and-automation/chatgpt-ai-compute-power

NVIDIA NeMo Guardrails are a new open-source software solution to prevent A.I. 'hallucination'

https://www.shacknews.com/article/135225/nvidia-nemo-guardrails-ai-software

Emergent Abilities of Large Language Models

https://www.assemblyai.com/blog/emergent-abilities-of-large-language-models/

OpenAI’s CEO Says the Age of Giant AI Models Is Already Over

https://www.wired.com/story/openai-ceo-sam-altman-the-age-of-giant-ai-models-is-already-over/