Although some are calling for AI labs to pause research and development of AI systems1, I believe we are long past an opportunity for improvement through reflection. There is no benefit to closing the stable doors after the horse has run out of the barn. Read: the pause’s report on AI policymaking.

I don’t have a problem with the thinking, but the belief that such a request has the potential to meaningfully alter outcomes is misplaced. We are beyond letter writing. Politely asking competitors to stop innovating in the name of broad safety simply is not going to be effective, especially when many will do as they please with or without us.2 3

As a concept, I like the idea of all of us coming to agreement on some of the most urgent, impactful, and complex conversations of our time.

As a realist, I have to acknowledge what is no secret: major players have been aggressively deploying unprecedented amounts of cash and resources in an effort to place themselves among the first to claim exclusive ownership of impossibly effective solutions.4

A new divide between haves and have-nots is emerging. We must face related inevitabilities with appropriate and effective responses. We must make decisions for a world in which superintelligent systems exist and have been developed outside of the bounds of safe and sane training. We are well beyond our ability to broadly influence or contain AI development. It is a misguided use of our time to focus on limiting the general population’s access to these tools; we must do our best to ensure that those who wield these systems do so responsibly.5

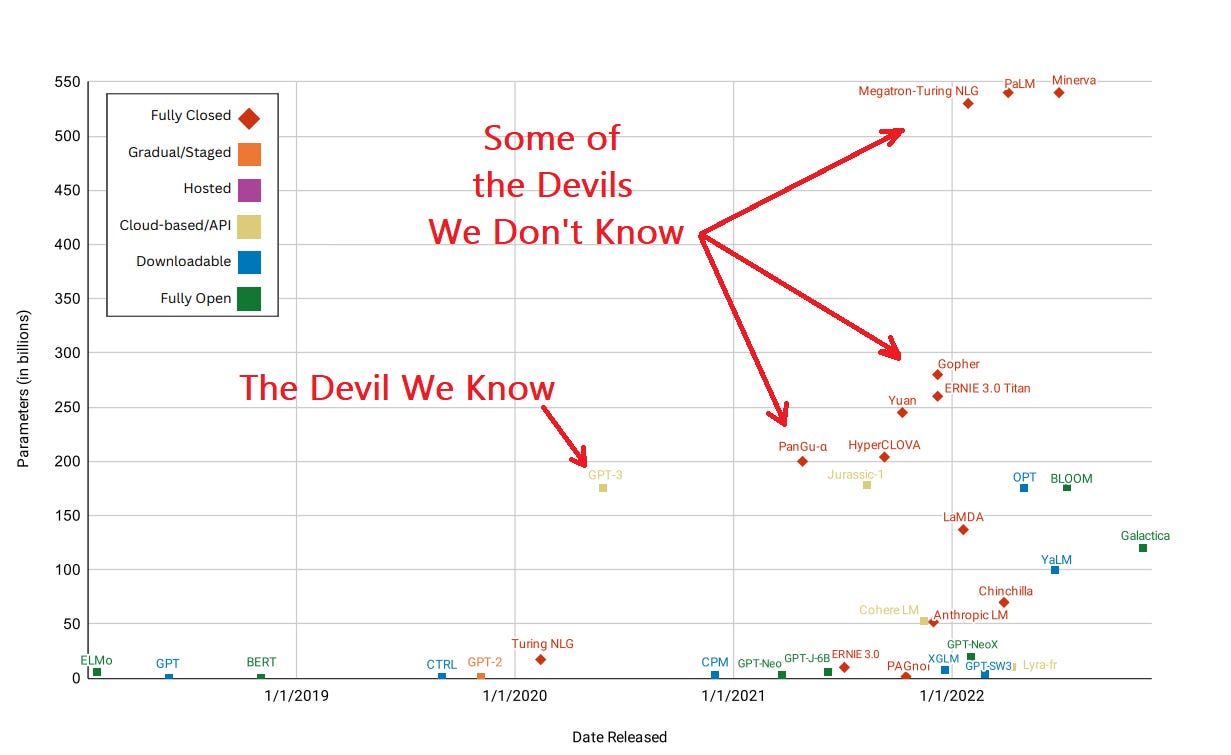

OpenAI needs to be celebrated for democratizing access to AI. The release of ChatGPT (GPT-4 in particular) was bold and unexpected. Big leaps which shock the consciousness will inevitably be accompanied by cries to ensure the technology is rolled out responsibly, but ChatGPT is not the big technological advance that we need to fear. What makes ChatGPT special is not its superiority, but its broad availability. I am not suggesting that OpenAI is undeserving of criticism, but if the concern is capabilities (and it should be), then we need to shift our attention to the emergent systems from which the general population will never have the privilege of benefiting.6

Let’s say we did somehow manage to implement a great pause, with broad and sweeping rules which were backed by real capacity to compel action. Given how these things tend to play out, it is not a stretch to imagine a lawyer defending a company that continued development despite these well-crafted rules:

“My clients' groundbreaking research in artificial intelligence was initially focused on models less powerful than GPT-4 and not specifically designed as Large Language Models (LLMs). It was only upon completion that they realized the true power of their creation. We respectfully argue that the recent legislation restricting LLMs more powerful than GPT-4 is overly broad and far-reaching, inadvertently affecting innovations with fundamentally different intentions.”

- High-powered ChatGPT lawyer, probably

To be fair, enterprises that have invested substantial time and resources in such things deserve rules which are clear and unambiguous. Unfortunately, I am not optimistic that the collective “we” will arrive at a destination within the time required.

All that said, with the reach of such technologies being absolute, it is inevitable that not all developments will be above board and performed by well-regulated entities. The present landscape is not limited to ethical actors and those prioritizing adherence to a collective goal; it also includes companies that have an open commitment to act primarily in the best interests of shareholders, governments with questionable intentions and poor track records of working together, and organized crime with more sinister agendas. We face a problem that we are unable to policy-make our way out of. If we are to address these issues in any meaningful way, we must reconsider how these developments will affect not only businesses and individuals, but our broader societies and perhaps even humanity as a whole.

These challenges are profound, and many are far more impactful than the relatively inconsequential effects of plebeians tinkering with a shiny new toy. Do I fear abuse of ChatGPT? Absolutely. However, the codification of a hierarchy which places private, profit-seeking entities in a relatively permanent and incontestable position of power is a far greater threat.

AI brings a new era which demands new approaches. Existing frameworks are perhaps too slow and ineffectual to respond adequately. Policymakers are ill-equipped to solve these problems. The letter does well to identify that urgency is necessary, but as we drift beyond this event horizon we need more than attention if we are to prepare for the future; immediate action is imperative.

Open Letter asking for a pause in “the training of AI systems more powerful than GPT-4”

https://futureoflife.org/open-letter/pause-giant-ai-experiments/

China’s AI Edging Ahead Of U.S.

https://www.forbes.com/sites/craigsmith/2023/01/14/chinas-ai-implementation-is-edging-ahead-of-the-us/?sh=637a7a092dfb

Global AI revenues expected to exceed $500 billion in 2023

https://www.business-standard.com/article/technology/global-artificial-intelligence-spending-to-reach-434-bn-in-2022-report-122022000195_1.html

Yoshua Bengio on the Pause

https://www.youtube.com/watch?v=I5xsDMJMdwo

Risk related to the release of AI systems, Irene Solaiman | Hugging Face

https://arxiv.org/pdf/2302.04844.pdf